Since computers first took shape back in 1941, they have filled entire rooms, then office desks, then pockets. But they have all had one thing in common: they were designed by human minds. Over the years, many people have wondered: what if computers designed themselves?

One day soon, an intelligent computer could itself create a machine much more powerful than itself. That new computer would probably in turn make another, even more powerful one, and so on. Artificial intelligence would then travel an exponential upward curve, reaching heights of cognition inconceivable to humans. This, in a nutshell, is the technological singularity.

Technological singularity

The term “technological singularity” dates back more than 50 years, to a time when scientists were just starting to tinker with the binary code and circuits that made basic computation possible. Even then, the technological singularity was a stunning and formidable concept.

A new generation of super-intelligent computers could revolutionize everything, from nanotechnology to immersive virtual reality to superluminal space travel.

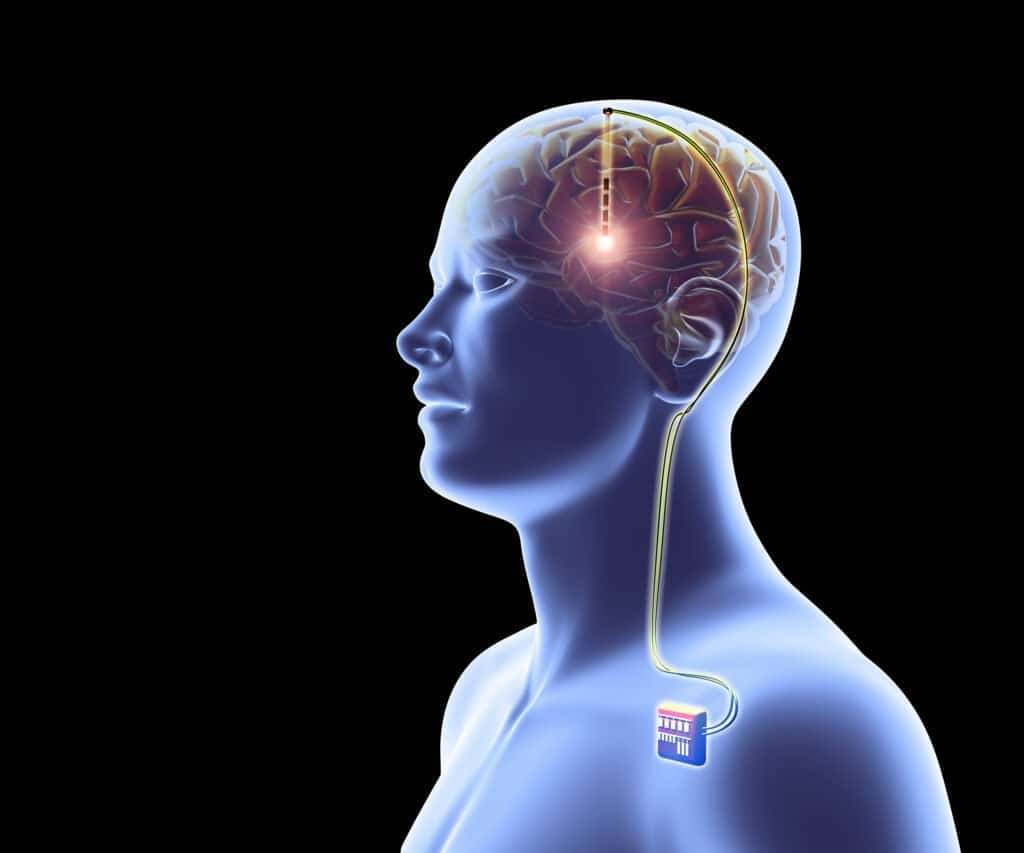

With the technological singularity, instead of relying only on our biological brains, we could use artificial intelligence and interface with it directly. We could improve or boost the performance of our brains with implants, or even digitally upload our minds so that they outlive our bodies.

The result of the technological singularity would be a supercharged humanity, capable of thinking at the speed of light and free from biological concerns.

A totally new world

The philosopher Nick Bostrom thinks this dynamic world could usher in a whole new era.

In this world we would all be more like children in a giant Disneyland run not by humans, but by machines that we have created, or that they have created themselves.

Nick Bostrom, philosopher, writer and director of the Future of Humanity Institute at Oxford University

The starry sky above me, the moral law inside my processor

The technological singularity could take us into a utopian fantasy or a dystopian nightmare. And Bostrom knows this well. He has been thinking about the emergence of superintelligent AI for decades and knows inside out the risks that such creations entail.

There's the classic sci-fi nightmare of a robot revolution, of course, in which machines decide they'd rather be in control of the Earth.

What's even more likely, though, is the possibility that a superintelligent AI's moral code, whatever it may be, simply doesn't align with ours.

An AI responsible for fleets of self-driving cars or the distribution of medical supplies could wreak havoc if it no longer valued human life the same way we do.

The AI alignment problem, as it is called, has taken on a new urgency in recent years, thanks in part to the work of thinkers like Bostrom.

Technological singularity and differences of thought

If we are unable to control a superintelligent artificial intelligence, our fate may depend on whether future machine intelligence thinks like us. On this front, Bostrom reminds us that efforts are underway to “design AI so that it actually chooses things that are beneficial to humans, and chooses to ask us for clarification when it isn't clear what we mean.”

There are ways we can teach human morality to a nascent superintelligence. Machine learning algorithms could be taught to recognize the human value system, just as today's GANs (generative adversarial networks) are trained on databases of images and texts. Or several AIs could argue with each other, under the supervision of a human moderator, to build better models of human preferences.

A question of respect

If the technological singularity were to create not just simple machines but genuine artificial thinking minds, we would also have to consider the ethical aspect. It would become necessary, says Bostrom, to ask ourselves whether it is right to influence them, and to what extent.

In short, in this era of conscious machines, the technological singularity could impose a new moral obligation on human beings: to treat digital beings with respect.