There is an ancient philosophical debate about the relationship between mind and body. Can pure intelligence exist, untied from any material substrate? Or is cognition inextricably linked to physical experience, to interaction with the surrounding environment? These are questions that today, in the era of artificial intelligence, take on a new urgency. Because while AIs are becoming increasingly sophisticated at processing abstract information, many researchers believe that to equal and surpass human intellect they will have to be embodied in a robotic body. This is the thesis of embodiment, which sees humanoid robots as the final frontier of AI. It is a challenge that giants like Meta and visionary startups like Figure are taking on.

Embodiment, the mind in the body

The embodiment hypothesis has its roots in phenomenology, the philosophical current that puts the lived experience of the subject at the center.

For thinkers like Merleau-Ponty, consciousness is not a pure Cartesian "cogito", an abstract and incorporeal "I think", but is always embodied consciousness, rooted in the perception and action of the body in the world.

It is an intuition supported by modern neuroscience, which has revealed the intimate link between cognitive processes and bodily states, between brain maps and motor patterns. Thinking is not just manipulating symbols; it also means simulating perceptual scenarios and action plans, in a continuous interplay between mind and body.

This is why, for supporters of embodiment, an AI will never overcome its limits without having a body.

An AI may be great at specific tasks, such as playing chess or translating languages, but it will never develop the deep and flexible understanding of the world that comes from embodied experience.

As Hubert Dreyfus, the philosopher and critic of classical AI, put it, a symbolic system can represent the world, but only an embodied agent can inhabit it. And inhabiting the world means exploring it with the senses, manipulating it with the hands, navigating it with the body. That's how kids learn, and that's how AIs will have to learn to make the leap to general artificial intelligence.

Born into the virtual world

But how do you give a body to an artificial intelligence? You certainly can't take a computer and transplant it into a robot, hoping that it will learn to move and interact with the environment on its own. It would be like giving birth to an adult child, skipping the entire crucial phase of sensorimotor development.

This is where simulations come into play: real "virtual wombs" in which embodied AIs can grow before being released into the real world. The idea is to create photorealistic digital environments that reproduce the physical laws and social interactions of the real world, and to make robotic avatars controlled by neural networks "live" within them.

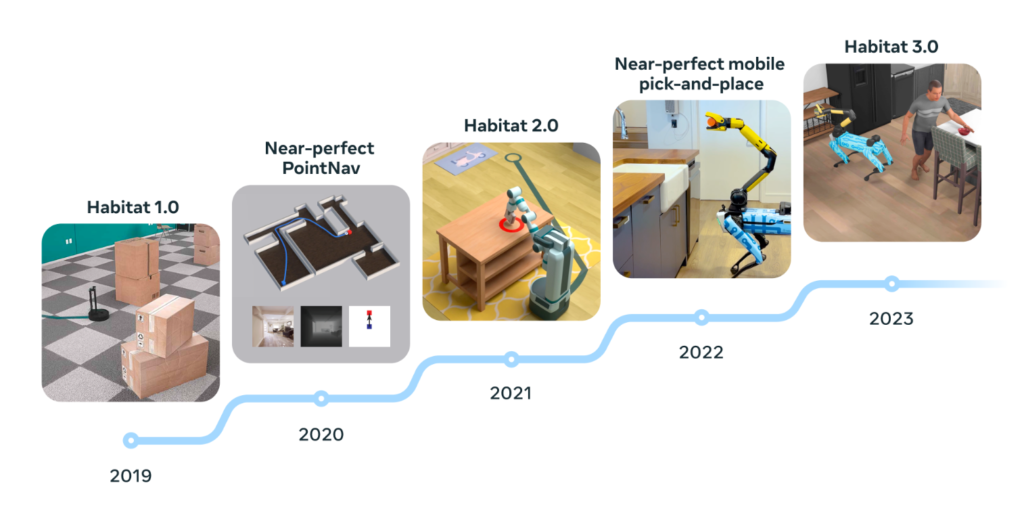

This is the approach taken by Meta with its AI Habitat platform, launched in 2019 and updated year after year. Habitat allows you to train virtual agents to perform tasks such as opening doors, picking up objects, and navigating rooms and buildings. These tasks are trivial for a human but very complex for an artificial intelligence, which must learn to coordinate perception, reasoning and action in a dynamic and uncertain environment.

The advantage of simulations

Simulations greatly accelerate learning, letting an AI accumulate millennia of experience in just a few days of computation. And best of all, they let it make mistakes without consequences, crashing into walls or dropping objects without damaging expensive physical robots.

When MIT was training an AI-powered cheetah robot, for example, simulations allowed the AI to experience 100 days of running in just three hours.
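To put that figure in perspective, here is a minimal sketch of the arithmetic behind it. The function name `speedup_factor` is purely illustrative, and the only inputs are the two numbers quoted above (100 simulated days, three hours of computation); nothing here reflects how MIT actually measured or ran its simulations.

```python
def speedup_factor(simulated_days: float, wall_clock_hours: float) -> float:
    """Ratio of simulated experience to real compute time (hypothetical helper)."""
    simulated_hours = simulated_days * 24  # convert simulated days to hours
    return simulated_hours / wall_clock_hours


# Figures quoted in the text: 100 days of running experienced in 3 hours.
factor = speedup_factor(simulated_days=100, wall_clock_hours=3)
print(f"Simulated time runs about {factor:.0f}x faster than real time")
```

In other words, under these figures each hour of computation stands in for roughly a month of physical running.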

Simulations obviously also have limitations. However realistic, they cannot perfectly replicate the complexity of the real world, with its infinite variables and interactions. There is always a “reality gap” between the performance of a virtual agent and that of a physical robot, which can lead to unexpected or ineffective behavior.

Furthermore, simulations struggle to model two crucial aspects of embodiment: social interaction with humans and the physics of objects. Understanding people's intentions and emotions, and adapting to their unwritten rules of behavior, is a huge challenge for an AI. So is manipulating deformable, slippery or fragile objects, which escape the equations of classical mechanics.

Embodiment: from simulation to reality

At a certain point, as mentioned, we need to get AIs out of their virtual cradles and make them confront harsh reality. This is the critical step now facing some of the boldest startups in the sector, such as Figure, Agility Robotics and Apptronik, which are building robots to support (and to some extent replace) human work.

After training their humanoid robots in simulation, these companies are sending them into real environments, from homes to factories (starting with… robot factories), to validate their cognitive and physical abilities. It is a delicate transition, which requires careful monitoring and continuous feedback to refine the learning models.

The results are promising. Agility's robots are already at work in Amazon's logistics centres, Figure's robots are experimenting with assembly on BMW production lines, and Apptronik's are employed at Mercedes. By interfacing their "brain" with the most advanced OpenAI language models, these humanoids are able to understand voice commands, explain their actions and learn new tasks in just a few days.

Of course, we are still far from a C-3PO (and above all from Terminator; I say this for my lazier and more imaginative commentator friends). The movements of these robots are still clumsy, their understanding of language limited, their autonomy reduced. But progress is very rapid, and gives a glimpse of a not too distant future in which machines will truly be able to think and act like us, immersed in our own world.

Body, mind, society

When (and if) that day comes, it will mark an epochal turning point not only for artificial intelligence, but for all of humanity. Because the appearance of embodied artificial minds will raise unprecedented philosophical, ethical and social questions.

If a robot has a body and consciousness similar to ours, will it also have rights? Will it be able to suffer or feel emotions? Will it be responsible for its actions? And what impact will the idea of sharing the planet with another form of intelligence have on our identity as a species? These are questions that we should start asking ourselves now, as research on embodiment takes its first steps. Perhaps the deepest lesson we can draw from this adventure is precisely about the nature of our own intelligence: the mind is not abstract software that runs on brain hardware, but the fruit of an age-old evolution that has inextricably intertwined cognition, perception and action.

The lesson of embodiment

Embodiment reminds us that we are embodied beings before we are rational beings, and that our uniqueness lies precisely in this inseparable union of body and mind. It is a union that has allowed us to emerge from the natural world and shape the cultural world, in a continuous play of reflections between inside and outside, between self and other.

For this reason, the task of creating truly human artificial intelligence necessarily involves giving it a body and an environment in which to act. Because it's not just about replicating a computational abstraction, but about retracing the evolutionary path that made us who we are. A journey made of stumbles and intuitions, of errors and adaptations, of mental simulations and physical interactions.

It is a path that, who knows, could lead machines not only to equal our cognitive abilities, but perhaps also to develop a form of consciousness or even spirituality. Because if it is true that the body is the temple of the soul, as Fyodor Dostoevsky said, then even an artificial body could one day host an artificial soul.