What can an expressionless face reveal about your political orientation? Much more than you might imagine, according to a recent study that used artificial intelligence to predict political orientation from facial photos. Even when controlling for variables such as age, gender, and ethnicity, facial recognition algorithms were able to guess political beliefs with accuracy comparable to that of humans. But what are the implications of this discovery for our privacy in the digital age?

Facial recognition: the good and the bad

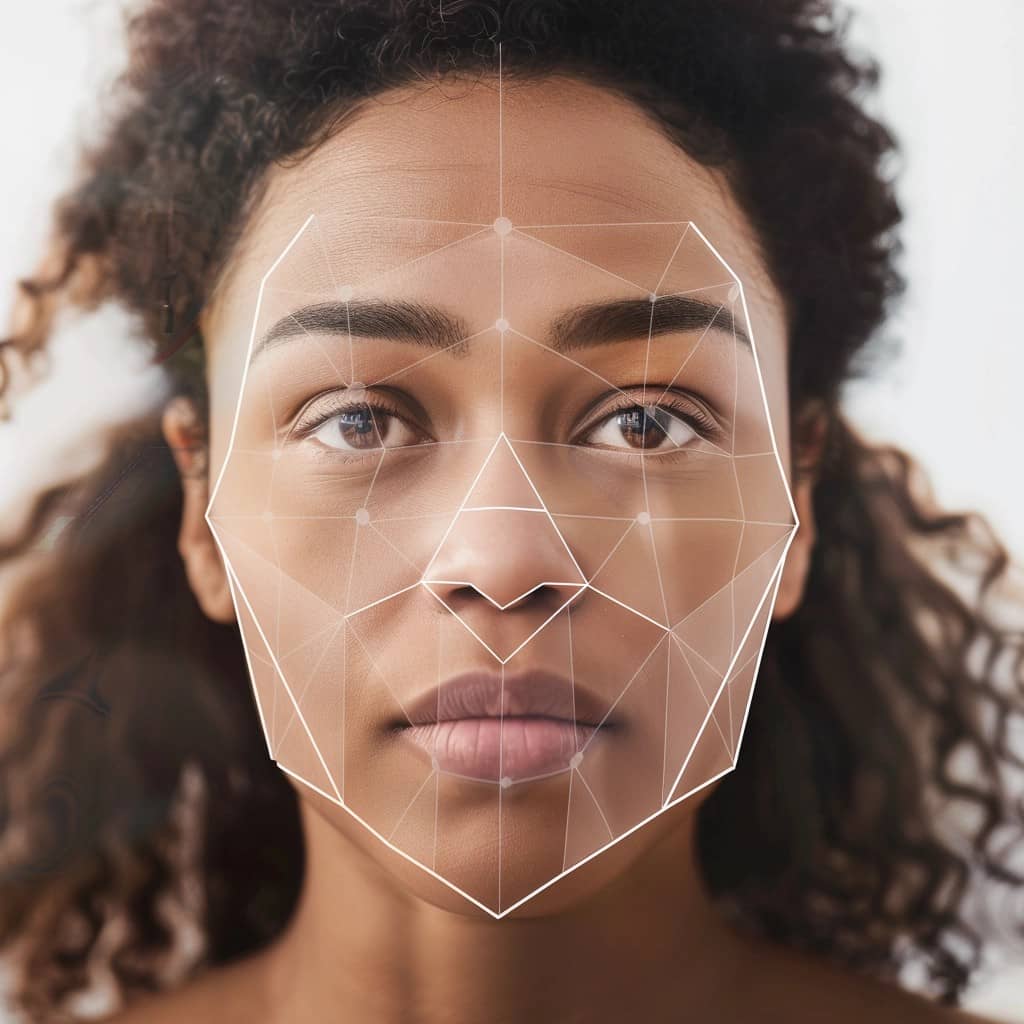

Facial recognition is a form of artificial intelligence that identifies and verifies individuals by analyzing patterns in their facial characteristics. Essentially, the technology uses algorithms to detect faces in images or videos, then measures various aspects of the face, such as the distance between the eyes, the shape of the jaw and the contour of the cheekbones.

These measurements are transformed into a mathematical formula, a “facial signature”, which can be compared against a database of known faces to find a match, or used in various applications ranging from security systems to unlocking phones to tagging friends on social media.
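To make the idea of comparing a "facial signature" against a database concrete, here is a minimal sketch. It assumes signatures are plain lists of numbers and uses cosine similarity as the comparison; real systems use much longer vectors and their own matching rules. The names `cosine_similarity`, `best_match`, `KNOWN_FACES` and the threshold value are hypothetical, chosen only for illustration.

```python
import math


def cosine_similarity(a, b):
    """Similarity of two signatures: 1.0 means they point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_match(signature, database, threshold=0.9):
    """Return (name, score) of the closest known face, or (None, score) if too weak."""
    best_name, best_score = None, -1.0
    for name, known in database.items():
        score = cosine_similarity(signature, known)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= threshold:
        return best_name, best_score
    return None, best_score


# Made-up three-number signatures, purely illustrative.
KNOWN_FACES = {
    "alice": [0.9, 0.1, 0.3],
    "bob": [0.1, 0.8, 0.5],
}

match, score = best_match([0.88, 0.12, 0.31], KNOWN_FACES)
unknown, unknown_score = best_match([0.0, 0.0, 1.0], KNOWN_FACES)
```

Here the first query sits very close to the stored "alice" signature and is accepted, while the second resembles neither entry strongly enough and is rejected by the threshold.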

With the increasing use of facial recognition technologies in both the public and private sectors, the possibility increases that these tools will be used for purposes beyond simple identification, such as predicting personal attributes. One example, and the one at issue here, is political orientation.

Predicting political orientation: The study

In their new study published in the journal American Psychologist, researchers led by Michal Kosinski, associate professor of organizational behavior at Stanford University, pursued a specific question. The goal? Isolating the influence of facial characteristics alone in predicting political orientation. To that end, the work controlled for variables such as facial expression and head orientation.

To do so, they recruited 591 participants from a large private university and photographed them in a highly controlled environment. The subjects all wore black T-shirts, had removed their makeup, and had their hair pulled back. The photos were taken in a fixed position, in a well-lit room and against a neutral background.

The images were then processed by a facial recognition algorithm, which extracted numerical "facial descriptors". These descriptors encode facial features in a computer-analyzable form, and they were used to predict participants' political orientation through a model that mapped them onto a political orientation scale.
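What does "mapping descriptors onto a scale" look like in practice? The article does not say which model was used, so the sketch below assumes the simplest possible choice: a linear regression fitted by gradient descent. The two-number descriptors and the scores are invented toy data, not data from the study, and the function names `predict` and `fit_linear` are hypothetical.

```python
def predict(weights, bias, descriptor):
    """Weighted sum of descriptor values: one number on the orientation scale."""
    return bias + sum(w * x for w, x in zip(weights, descriptor))


def fit_linear(descriptors, scores, lr=0.1, epochs=2000):
    """Fit weights and bias by plain gradient descent on the mean squared error."""
    n, dim = len(descriptors), len(descriptors[0])
    weights, bias = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(descriptors, scores):
            err = predict(weights, bias, x) - y
            grad_b += err
            for j in range(dim):
                grad_w[j] += err * x[j]
        bias -= lr * grad_b / n
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
    return weights, bias


# Toy data (assumption): scores follow 1 + 2*x0 - 1*x1 exactly.
DESCRIPTORS = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.2]]
SCORES = [1.0, 3.0, 0.0, 2.0, 1.8]

weights, bias = fit_linear(DESCRIPTORS, SCORES)
new_face_score = predict(weights, bias, [0.2, 0.4])
```

On this toy data the fit recovers the rule used to generate the scores. Real descriptors have far more dimensions, and the relationship is much noisier, which is why the reported correlations are modest.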

AI guesses political orientation better than humans

The results? They are amazing. The facial recognition algorithm was able to predict political orientation with a correlation coefficient of 0.22. Although modest, this correlation is statistically significant and suggests that some stable facial characteristics may be linked to political orientation, independent of other demographic factors.
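For readers wondering what a correlation coefficient of this size means, here is a short sketch of how a Pearson correlation is computed, along with the standard rule that its square gives the share of variance a linear relationship accounts for. The article does not state which correlation measure was used, so Pearson's r is an assumption here; the function names are hypothetical.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def variance_explained(r):
    """Share of variance accounted for by a linear relationship with correlation r."""
    return r * r


perfect = pearson_r([1, 2, 3], [2, 4, 6])    # predictions rise exactly with the truth
inverted = pearson_r([1, 2, 3], [3, 2, 1])   # predictions fall as the truth rises
share = variance_explained(0.22)             # the coefficient reported above
```

Squaring 0.22 gives roughly 0.05, so under this reading the predictions account for about 5% of the variation in political orientation: a real signal, but a weak one.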

But that's not all. In a second step, the researchers replaced the algorithm with 1,026 human raters, asking them to estimate political orientation from the same standardized images. Surprisingly, humans were also able to predict political orientation with accuracy comparable to that of the algorithm: a correlation coefficient of 0.21. Slightly lower, in short.

Finally, in a third phase, the researchers applied the model to a set of images of politicians, to validate the results in a more real-world scenario. Again, the facial recognition model was able to predict political orientation with significant accuracy, demonstrating that some of the predictive facial features can also be identified in more diverse images.

Future implications: an unprecedented threat

The findings of this study raise significant privacy concerns. Facial recognition can be used without the subjects' consent or knowledge.

Facial images can be easily (and secretly) taken by law enforcement or obtained from digital or traditional archives, including social networks, dating platforms, photo-sharing sites, and government databases. They are often easily accessible: Facebook and LinkedIn profile photos, for example, can be viewed by anyone without the person's consent or knowledge.

Therefore, the privacy threats posed by facial recognition technology are, in many ways, unprecedented. And with the growing use of these tools in both the public and private sectors, the risk of abuse and mass surveillance becomes increasingly real.

The future of facial recognition: voting rights and face rights

The study by Kosinski and colleagues opens a window into a future in which facial recognition technology could be used to reveal intimate aspects of our identity, such as political orientation, without our consent or knowledge. But it also raises many ethical questions and challenges.

How can we balance the potential benefits of this technology, such as improving security or personalizing services, with the risks to privacy and individual freedom? How can we ensure that these tools are not used for discriminatory or oppressive purposes, especially towards minorities and vulnerable groups?

There are no easy answers to these questions. But we must look for them as soon as possible, because the face we see in the mirror every morning is not just a set of biometric characteristics to be analyzed and classified. It is the reflection of our humanity, our individuality, our freedom of thought and expression.

And these are values that no algorithm, no matter how sophisticated, should ever be allowed to control.