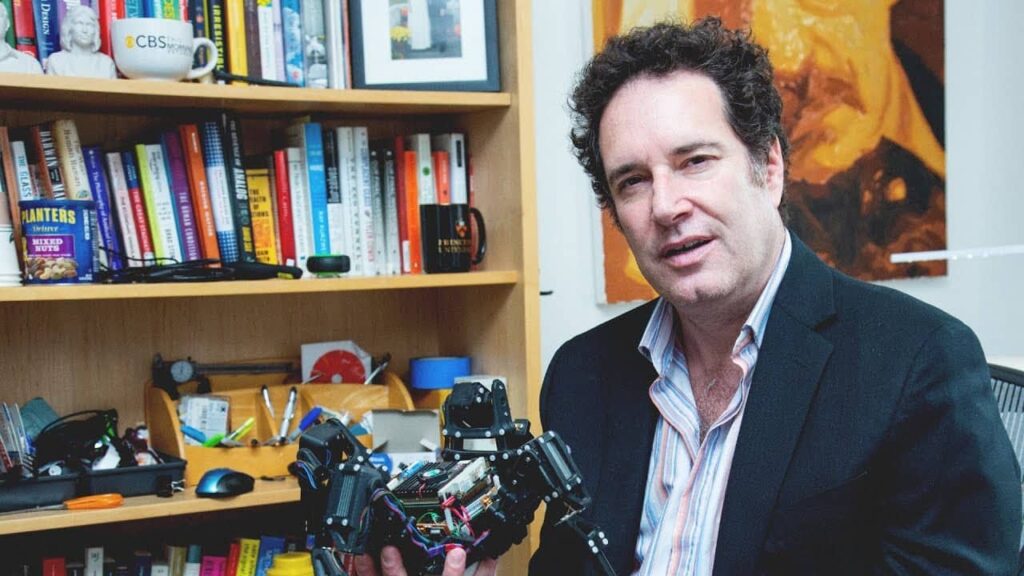

We may soon interact with a robot that not only understands human language but can also predict and mirror our emotions, smiling just before we do. That is what Hod Lipson and his team at Columbia University have achieved with Emo. Who is he? A humanoid robot designed to make interactions with machines surprisingly more human.

He sees, foresees and smiles

As mentioned, Emo can predict a person's smile a second before it happens and then mirror it on his own "face". It is a step forward in imitating human body language, and an advanced exercise in perception and interaction.

Although artificial intelligence has made significant progress in imitating human language, physical interactions with robots often run into the phenomenon known as the “uncanny valley”, where a robot's near-human appearance provokes unease rather than empathy. Thanks to his ability to replicate human facial expressions, the smile in particular, Emo could be the key to overcoming this obstacle, making interactions with robots feel more natural and less unsettling.

How does Emo work?

Emo is equipped with high-resolution cameras set in his "pupils" and a flexible plastic skin, attached with magnets and driven by 23 separate motors. He uses two neural networks: one analyzes human facial expressions, and the other controls his own facial expression.

This combination of technologies allows the robot to anticipate and replicate expressions such as smiling with surprising precision.
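For readers who want a more concrete picture, here is a toy Python sketch of the two-network idea described above. It is not Emo's actual code: the landmark values, the motor gains and the functions `predict_future_expression`, `inverse_model` and `mirror_step` are all hypothetical. Simple linear extrapolation stands in for the first neural network, and a fixed linear mapping stands in for the second. What the sketch does show is the order of operations: first anticipate the other person's expression, then compute the motor commands that would reproduce it.

```python
from typing import List, Sequence

# Hypothetical representation: each frame is a short list of facial-landmark values,
# e.g. [left mouth-corner lift, right mouth-corner lift].
Frame = List[float]


def predict_future_expression(history: Sequence[Frame], steps_ahead: int) -> Frame:
    """Stand-in for network 1: extrapolate each landmark linearly from the last two frames."""
    if len(history) < 2:
        raise ValueError("need at least two frames to estimate a trend")
    prev, last = history[-2], history[-1]
    return [x + (x - p) * steps_ahead for p, x in zip(prev, last)]


def inverse_model(target: Frame, gains: Sequence[Sequence[float]]) -> List[float]:
    """Stand-in for network 2: map target landmarks to motor commands, clamped to [0, 1]."""
    commands = []
    for row in gains:  # one row of hypothetical gains per motor
        u = sum(g * t for g, t in zip(row, target))
        commands.append(min(1.0, max(0.0, u)))
    return commands


def mirror_step(history: Sequence[Frame], gains: Sequence[Sequence[float]],
                steps_ahead: int) -> List[float]:
    """Anticipate the partner's upcoming expression, then compute commands to match it."""
    predicted = predict_future_expression(history, steps_ahead)
    return inverse_model(predicted, gains)


# Usage: a smile starting to form (both mouth corners rising over three frames).
history = [[0.00, 0.00], [0.05, 0.04], [0.10, 0.08]]
gains = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # three hypothetical motors
commands = mirror_step(history, gains, steps_ahead=5)
```

Because the prediction looks several frames ahead, the commands are ready before the other person's smile is complete; a real system would replace both stand-ins with trained networks.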

A few more words on the method

Emo learns much as a person learns to recognize and reproduce their own facial expressions in front of a mirror. The first neural network was trained on YouTube videos of people making various facial expressions, while the second learned by watching Emo himself express different emotions. This self-learning method gives Emo an almost human-like ability to interpret and reproduce facial expressions.
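The mirror analogy can also be sketched in a few lines of Python. The sketch assumes a drastically simplified robot with a single motor and a single facial landmark; `hidden_face`, `babble` and `fit_inverse_model` are hypothetical names, and `hidden_face` is a made-up stand-in for the physical face that the learner never inspects directly. The robot issues random motor commands (a practice often called motor babbling in robotics), observes the result, and fits a straight-line inverse model by least squares, in place of the neural network used for Emo.

```python
import random
from typing import Callable, List, Tuple


def hidden_face(command: float, rng: random.Random) -> float:
    """Hypothetical physics, unknown to the learner: how far one motor lifts a mouth corner."""
    return 0.8 * command + 0.05 + rng.gauss(0.0, 0.005)  # small camera noise


def babble(n: int, rng: random.Random) -> List[Tuple[float, float]]:
    """Issue random motor commands and record (observed landmark, command) pairs."""
    samples = []
    for _ in range(n):
        command = rng.random()  # random command in [0, 1)
        samples.append((hidden_face(command, rng), command))
    return samples


def fit_inverse_model(samples: List[Tuple[float, float]]) -> Callable[[float], float]:
    """Least-squares line mapping an observed landmark back to the motor command."""
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    var = sum((x - mean_x) ** 2 for x, _ in samples)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return lambda target: slope * target + intercept


rng = random.Random(0)  # fixed seed so the run is reproducible
inverse = fit_inverse_model(babble(200, rng))
command = inverse(0.45)  # command expected to produce a lift of 0.45
```

Once fitted, `inverse(0.45)` returns a command close to 0.5, since the hypothetical face lifts by 0.8 × 0.5 + 0.05 = 0.45 at that setting. The learner discovers this only by watching itself, which is the point of the mirror analogy.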

Now he smiles. What next?

Despite the excitement about Emo's potential, Hod Lipson and his team know that, to make interactions with robots truly human-like, they need to broaden the range of expressions Emo can imitate. The next goal is for Emo to respond not only by mimicking facial expressions but also by reacting appropriately to the context of the conversation.

A challenge that, if met, could permanently change our relationship with technology.