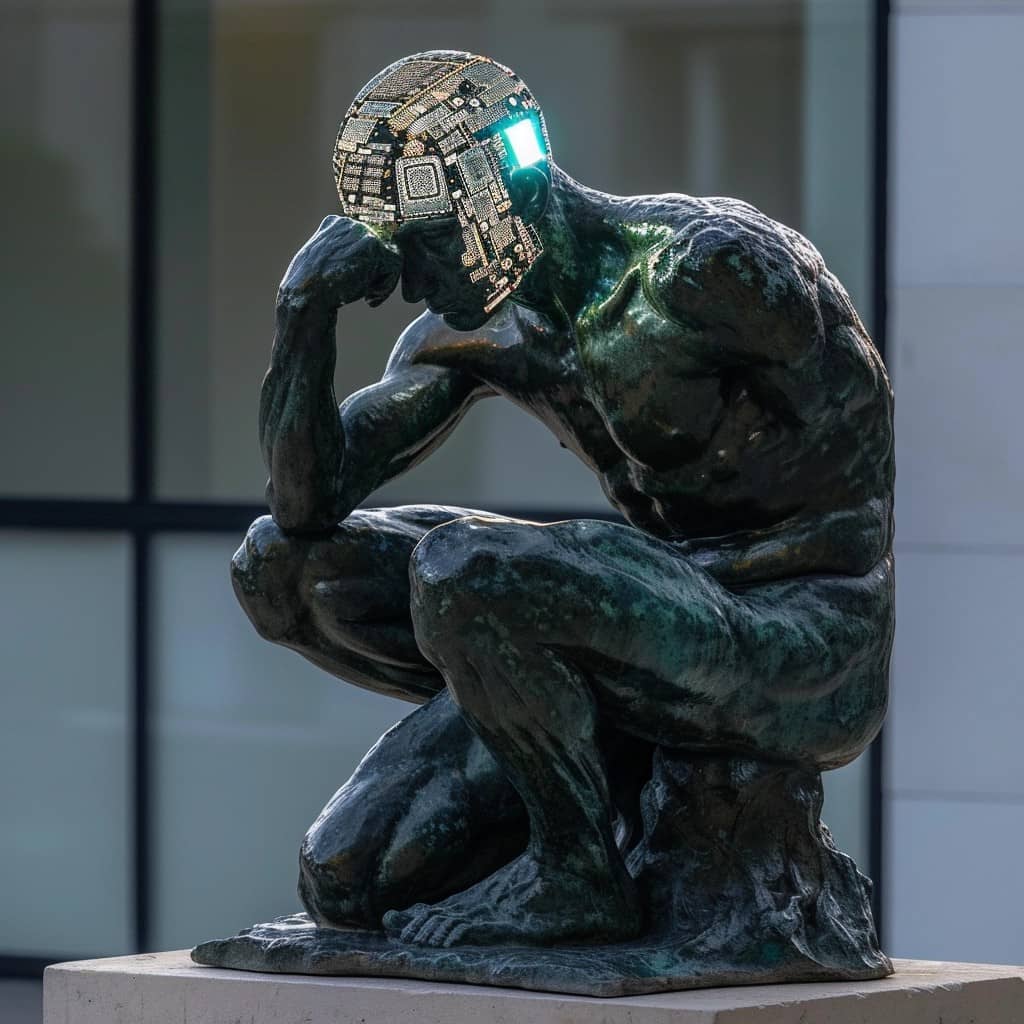

Think (the word could not be more apt) of an artificial intelligence that, before answering a question, pauses for a moment to reflect, to think to itself, weighing different possibilities and choosing the best one. “How human of you!” (cit.). This is what a group of researchers has achieved with an innovative training method called "Quiet-STaR".

This technique, which gives AI a sort of "inner monologue", is proving able to significantly improve the reasoning capabilities of artificial intelligence systems, opening up remarkable new prospects for the future of this technology.

If AI learns to “think” before speaking

Until now, the most popular chatbots, like ChatGPT, have been trained to generate fluid and convincing responses, but without any real ability to “reason” about what they are about to say or to anticipate where a conversation might go. In practice, they are very good at giving answers that "sound good" but that often lack logic, coherence and common sense.

With Quiet-STaR everything could change. This algorithm, developed by Eric Zelikman and colleagues at Stanford University (the paper is available on arXiv), does something new. It instructs the AI to generate many “internal rationales” in parallel before responding to an input. When it comes time to give an answer, the AI generates a mix of predictions, with and without reasoning, and chooses the best one (which can be verified by a human, depending on the type of question).

Finally, it learns to "think" by discarding the reasoning that has proven to be wrong. In practice, it is as if the AI were doing a “dress rehearsal” in its head before speaking, just like we humans do!
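To make the idea concrete, here is a toy Python sketch of the loop described above: blend the prediction made without a thought with the one made after it, check how well each blend predicted the text that actually followed, and keep only the thoughts that did better than average. It is an illustration under loud assumptions, not the researchers' code: the function names (`mix`, `score_thoughts`, `keep_helpful`), the fixed blending weight `w`, and the hand-written probabilities are all invented for this example, and a real system would adjust the model's weights rather than filter a list.

```python
import math

def mix(p_base, p_thought, w):
    """Blend two next-token distributions; w is how much to trust the thought."""
    return {tok: (1 - w) * p_base[tok] + w * p_thought[tok] for tok in p_base}

def score_thoughts(p_base, thought_preds, true_token, w=0.5):
    """Reward each thought by how much it helped predict the real next token."""
    logps = [math.log(mix(p_base, p, w)[true_token]) for p in thought_preds]
    baseline = sum(logps) / len(logps)  # average over all sampled thoughts
    return [lp - baseline for lp in logps]

def keep_helpful(thoughts, rewards):
    """Discard thoughts that did no better than average (reward <= 0)."""
    return [t for t, r in zip(thoughts, rewards) if r > 0]

# Hypothetical data for the prompt "2 + 2 =" (all numbers are made up).
p_base = {"4": 0.4, "5": 0.6}                      # prediction with no thought
thoughts = ["two pairs make four", "this looks like 2 + 3"]
thought_preds = [{"4": 0.9, "5": 0.1},             # prediction after thought 1
                 {"4": 0.2, "5": 0.8}]             # prediction after thought 2

rewards = score_thoughts(p_base, thought_preds, true_token="4")
kept = keep_helpful(thoughts, rewards)
print(kept)
```

In this made-up case the first thought raises the probability of the correct token and earns a positive reward, while the second lowers it and is dropped: the "dress rehearsal" in miniature.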

Let me think… QuietStar. Where have I heard this name before? IS THAT Q*?

In recent months the name Q*, or QStar, has been circulating in connection with the "psychodrama" that took place at OpenAI: an earthquake that dethroned CEO Sam Altman and then put him back in the saddle (with Microsoft directing). QStar is the project that, according to some, caused the "schism" at OpenAI. It is a project that aims to develop artificial intelligence with reasoning, memory and contextualization capabilities similar to those of humans, as an intermediate step towards the final goal of creating an AI with cognitive abilities first equal to and then superior to human ones, known as Artificial Superintelligence (ASI). Is this QuietStar an “offshoot” of Q*? We'll find out in the next few weeks.

A leap forward in reasoning (and mathematics)

The researchers applied Quiet-STaR to Mistral 7B, an open-source language model, and the results have been impressive. The “thinking” version of Mistral 7B scored 47.2% on a reasoning test, against 36.3% for the “vanilla” version. Of course, it still failed a school maths test, with a paltry 10.9%. But hey, that's still almost double the starting 5.9%! A result that makes you… think.
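For anyone who wants to check the "almost double" claim, here is a quick back-of-the-envelope calculation in Python. It uses only the four percentages quoted above; the helper name `gains` is invented for this example.

```python
def gains(before, after):
    """Return (gain in percentage points, ratio of after to before)."""
    return after - before, after / before

reasoning_points, reasoning_ratio = gains(36.3, 47.2)  # reasoning test
maths_points, maths_ratio = gains(5.9, 10.9)           # school maths test

print(f"Reasoning: +{reasoning_points:.1f} points, x{reasoning_ratio:.2f}")
print(f"Maths:     +{maths_points:.1f} points, x{maths_ratio:.2f}")
```

The maths ratio comes out between 1.8 and 1.9, so "almost double" is a fair description, while the reasoning score improves by roughly 30% in relative terms.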

Mind you, we are still far from bridging the gap between artificial neural networks and human reasoning capabilities. But Quiet-STaR seems to be a step in the right direction. Unlike other attempts to improve AI reasoning, which are very specific and not generalizable, this method can be applied “silently” to different types of models, regardless of the original training data.

The future of AI? An internal dialogue and a pinch of common sense

Apparently, the secret to smarter AIs is making them talk to themselves. One day we will have chatbots that, before answering our questions, will pause to reflect, perhaps saying: "Hmm, let me think for a moment...". And who knows, this internal monologue might lead to AI with a bit more common sense, capable of understanding context, grasping nuances and predicting the consequences of its words.

Of course, the road is still long. But research like this reminds us that intelligence, whether artificial or human, is not just a question of speed or computing power. It is also, and above all, a question of reflection, of reasoning, of the ability to put oneself in other people's shoes. Ultimately, what makes us human if not our ability to think, talk to ourselves, imagine future scenarios, learn from our mistakes?

While we wait for the machines to do it, let's start doing it again ourselves. If there's one thing I have learned from this news, it's that sometimes, to be smarter, you simply need to learn to think before you speak. And this, believe me, applies as much to circuits as to synapses.