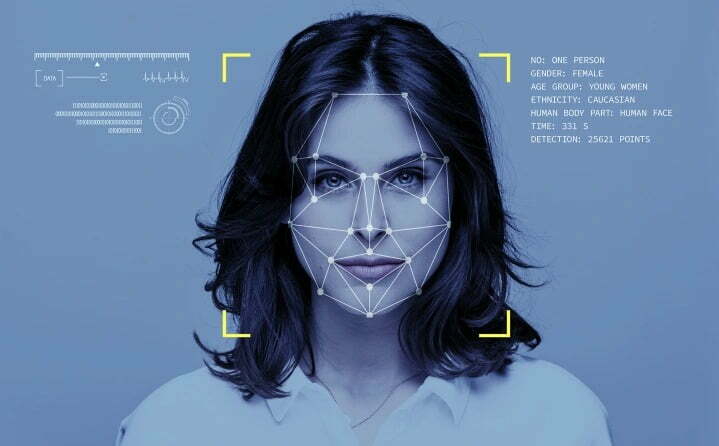

There is a parallel world in which every photo of us on Facebook ends up in the hands of the police, creating a virtually infinite file. Oh, no. It's not a parallel world: it's ours. It's really happening, and the company behind it is Clearview AI. For six years now, this controversial company has collected 30 billion photos from Facebook and other social media, without asking anyone for consent. The result? We are all potentially under the watchful eye of law enforcement, even if we have done nothing wrong.

The omnipresent eye

The CEO of Clearview AI, Hoan Ton-That, has admitted to collecting the photos without users' consent. The reason? To build a huge facial recognition database that police can use to identify those responsible for crimes and violent acts. However, the technology also poses serious privacy risks and has already led to wrongful arrests due to facial recognition errors. Privacy concerns have not gone unnoticed. Facebook, now Meta, sent a cease-and-desist letter to Clearview back in 2020, accusing the company of violating user privacy. Since 2017, the database has been consulted over a million times.

You can't close the stable door after the horse has bolted. Once a photo ends up in Clearview's police database, the people in it have little chance of getting it removed. Fragmented privacy laws around the world and poor coordination between countries make it virtually impossible to “clean” the file and thin out this giant cloak of surveillance.

Global police state

Over the last two years, many digital rights groups (above all Fight for the Future and the Electronic Frontier Foundation) have clamored for a ban on the use of Clearview AI by law enforcement. Nonetheless, massive use continues, complete with federal tests: who authorized them?

This technology puts everyone under surveillance, even those who believe they have nothing to hide. Furthermore, laws can change over time, turning legal actions into illegal ones and putting people at risk of arrest or social marginalization, even retroactively. The mechanism adopted by the American company is so pervasive that it "affects" even those who choose not to put their photos on social media. It is enough to appear in a photo with friends, and the biometric fingerprint of your face ends up in the Clearview police file.

Beyond all control

In Europe we have "maybe" acted in time (although I don't know how long this "moratorium" will last). Elsewhere, such as in the US, the use of Clearview AI and other facial recognition technologies by police forces is not monitored or regulated.

This is a gigantic issue, culpably underestimated even by the media, which are otherwise so attentive to digital rights when these involve "fashionable" minuets on topical issues. The widespread use of Clearview AI raises important questions about privacy, mass surveillance and civil rights, which must be addressed to protect people's freedom and safety.